Is this my moral tech/AI manifesto?


Ethical Impacts of AI

We could spend an eternity comparing the levels of evil we commit every day. It’s hard to pin down where the threshold of acceptable harm lies in our personal lives, and it’s even harder to control it in our professional lives.

The formula is: we need to commit harm against something/someone. What was the justification? Was it worth it? What/Who determines whether it was worth it?

- We need to kill animals/plants to eat. Do we consume/harvest what we kill responsibly?

- We harnessed fire to cook more nutrient-rich foods, but also found that fire can be a harmful tool when used improperly. Are we using this tool responsibly?

- We used violence to exploit our own species’ labor, and continue to do so with varying levels of nefariousness. Is there anything responsible about that?

- We dug up the long-dead remains of ancient organisms to power cars, planes, buildings, and infrastructure. In doing so, we disrupted plant and animal habitats and our own human communities, and we accelerated global temperature shifts. What did we do that for? Was the goal to see how much information we could gather about the planet before we destroyed it in the process?

Eating a beef burger has a larger carbon footprint than 500 AI prompts. Either way, when we choose to do either of those things, what purpose does it serve compared to the harm it causes? Do we value the life of a tree that can live thousands of years as much as that of another human?

Regardless, that comparison is a distraction. The physical infrastructure of the internet, cloud storage, and data centers is the truly disastrous culprit. These disrupt ecosystems, pollute already under-resourced communities, and insidiously transfer costs AND environmental blame from corporations to residents. AI is not the only enemy; it’s simply the latest weapon. We don’t think of buying and using tech the way we think of buying and using knives or guns, but it’s the same. We just don’t see the blood when we use our computers and phones at home.

If environmental impacts from data centers affect reproductive health, are we able to fully grasp the impact of our actions on future parents and generations we may never know? If we moved all data centers to another country, would we be able to fully grasp the impact of our actions on the people of that country, whose daily struggles we may never know?

And when is enough enough? This applies to both scenarios below:

- You’re told by your employer to use a new tool. To use the tool, you need to step on a child’s back. Allegedly this will help you help many other children.

- You have 24 hours in a day to fight for reproductive justice and live your life. How much of your life energy can you realistically dedicate to this one cause? What about other causes? Are you doing enough? When is it enough? When are you enough?

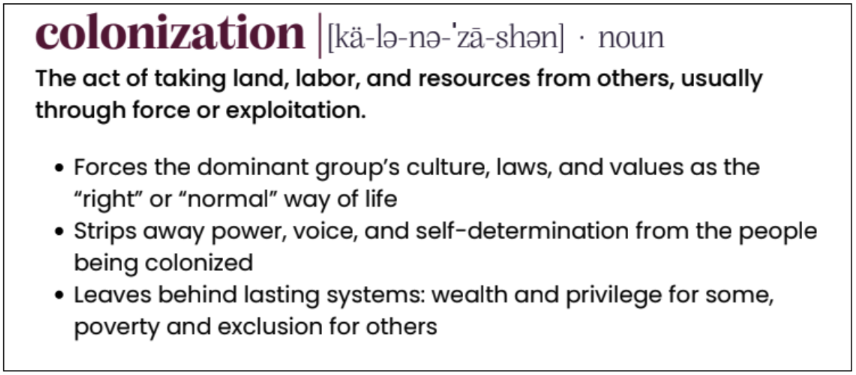

Tech Colonization

When we think about American history, we think about how yt ppl colonized what we know as Mexico, how yt ppl colonized what we know as the US, how yt ppl colonized what we know as Canada.

Have we reached the stage yet where we realize that tech companies led by technofascist yt men have colonized all major nation states globally? They may have knelt before the current administration, but the administration is surely just a stepping stone for them.

source: Presentation by Carpenter Nonprofit Consulting and By the Brujas, LLC

“I know as an Indigenous person that my healing is contingent on the oppressor also being a part of that healing process. I can heal on my own. But, so far, we can’t heal as a community at large until we are all engaged in [decolonization].” - Edgar Villanueva, author of Decolonizing Wealth

Tech Coercion

| Common arguments for adopting AI | My personal counterarguments |
|---|---|
| We don’t want to be left behind. | I think this is a valid point, but it ignores the power we have as a consumer collective to RESIST the status quo. Humans are generally resistant to change, yet in a society that is practically religiously obsessed with Progress™️, it is very challenging to resist what gets marketed as change for the common good. No one wants to be Debbie Downer, and no one wants to admit that maybe she was right all along. |
| We have to become part of the customer base in order to become stakeholders who can shift the company’s direction and approach to AI ethics and responsibility. | I think this is a completely invalid point. We’re all stakeholders in the tech industry at this point - what good has that done us so far? Did we change the mind of the right-wing CEO of Salesforce? Did we prevent Microsoft from deeply cutting nonprofit discounts? We want to prevent the creation of additional data centers. How can we do that by using technology that creates demand for more data centers? Granted, it’s largely not our consumer use that creates the need - it’s corporations, governments, militaries, etc. But they’ll certainly shift the blame to consumers. |
| We are already under-resourced, and this would help us create capacity. | I think this is a valid point, but I don’t think that AI literacy OR the product itself is READY for consumer use in most cases. AI could potentially help us create capacity if we were WILLING TO GIVE UP certain things. Ideally, we would wait a bit longer and see how the data privacy, corporate transparency, and functionality situations evolve. |
| This is just another new tool to learn and adapt to, like the camera or the car. | But maybe we shouldn’t have even started using cars? They expanded the speed and distance of our individual reach, all while decreasing the viable land to live on, water to drink, air to breathe… The framing is always, “humanity has always had to adapt to changing technologies, and this is no different,” but never, “humanity keeps creating technologies without considering harm reduction for humanity itself and for the health of the ecosystem it requires to survive.” |
| The environmental effects are not as bad as many other things we do. | Just because we’re already doing bad things doesn’t mean we should heap more bad things onto the pile. |
| We’ve already been using this tool, so why complain now? | We often experience situations where we don’t realize harm is being committed (to others or even to ourselves). Once we later have the consciousness, language, and strength to acknowledge that harm was done, we should say something, and we should change the status quo that allowed that harm to happen in the first place. |
| AI will help us solve the problems that AI is creating. | TBD. I would like to remain optimistic, but I remain doubtful, because the previous line of thought was that technology would help us solve the problems it created. Look where that got us. |

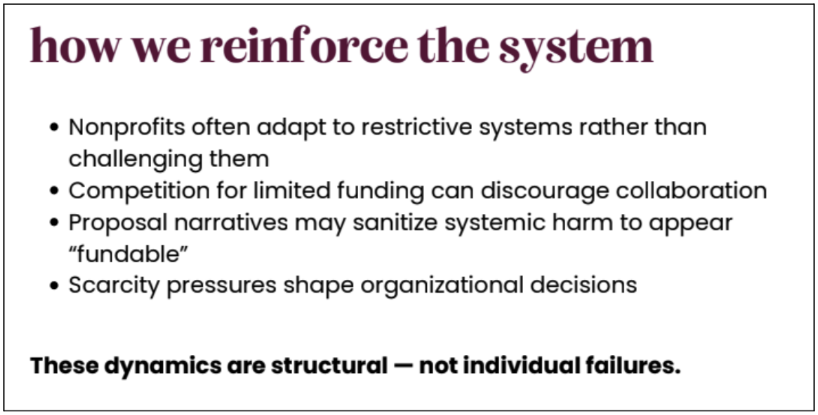

source: Presentation by Rosica Communications and NewBridge Cleveland

Who makes the decisions?

For nonprofit organizations, decisions are typically made by the board and Executive Director(s).

As employees and people working together for a united cause, we can have open discussions about our work culture, our personal beliefs, and where they intersect, in order to influence decision-making.

The people we work with and the ones we serve can also influence decision-making, though they’re not typically invited to the conversation.

The people who are most harmed by these decisions are often none of the aforementioned people. They are largely people in the Global South, though of course many of us feel residual effects to varying degrees.

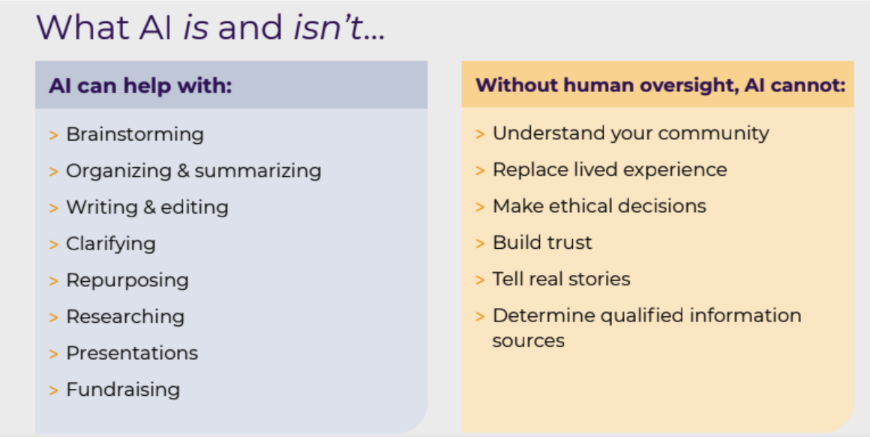

source: Presentation by Witch-Ways | Full Slide Deck

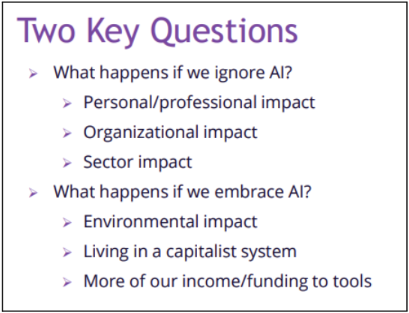

So… do we choose to use AI or not?

In many cases, we don’t get to choose - it’s forced upon us. The tech industry largely doesn’t care about consent.

In some ways, it’s useful. In some ways, it’s frivolous. In all ways, it’s harmful, but the same can be said of almost every action we take, so we must lower our ethical thresholds to survive.

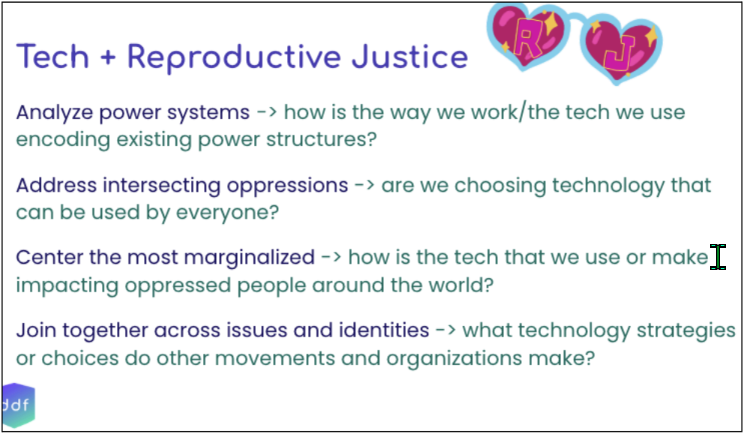

source: Presentation by Techies for Reproductive Justice, a project of Digital Defense Fund

It comes down to:

- DO we need to use it?

- In what specific instances would it be helpful?

- In what ways would it help?

- What data is required?

- Who/what benefits and who/what is harmed?

- How do we justify the harm?

- Does this create legal risk?

- What do their privacy policies, terms and conditions, and data processing agreements say about how they handle data, privacy, and security?

Also consider the concepts covered in Enshittification. Just because things are great now doesn't mean they will be in the future. This also applies to workplaces! It all depends on who is leading The Change™️ and whether that change is good for everyone or just for some...

Addendum

I'm obsessed with the concepts of Calm Tech (though I'm disappointed in how it's been implemented as a certifier of what I think are bougie, wasteful products lol).

When an escalator breaks down, it functionally becomes stairs. When a WiFi-enabled cat food dispenser breaks down, it functionally becomes a $200 piece of junk (also your pets will starve).

And I love thinking about windows as transparent technology that keeps dust and other things out of your home. It's fascinating.

But then I think about our era of digital technology and industrial machinery. Big Tech™️ markets itself with slogans like, "Save time with automation! Rely on technology to do the work for you! Simplify your life with TECHNOLOGY." They repackage the same product with new bells and whistles over and over again to distract us from the reality of tech overcomplicating our lives.

Bells and Whistles Repackaged

Think about products like Dropbox, Google Drive, and SharePoint. They're all the same base product: a file storage and organization system with additional collaboration and sharing functionality. They ~~compete~~ coordinate with each other on price markups by introducing new bells and whistles, but their core products are still the same.

With this type of capitalist "competition" we, as consumers, also find ourselves experiencing perpetual choice paralysis. All the products are the same! Better remain loyal to XYZ brand. But wait, XYZ brand just ran out of money! I guess I'll switch to ABC brand. Oh, but they just got bought by Nestle! Wait, are all brands Nestle??

The Reality of Tech Complexity

Working in IT is hard. Tech shifts and evolves every second. New threats are created with every new technology, piece of code, and exhausted human being who simply can't keep up with this unprecedented rate of cultural change.

I don't blame anyone for not being able to keep up, for refusing to keep up, or for giving up completely. It's ridiculous. We're held hostage by 3-year-old white boys who made up the game and keep changing the rules. Why are we even still playing this stupid game anyway? Oh, right, the stock markets and war crimes or something. #stonks

What can we do?

I don't fucking know! But here's what I believe.

Change starts with people and cultural shift.

- Kids need to WRECK their parents for maintaining the status quo like massive wankers.

- New parents need to raise cultural assassins and anarchists who believe in mutual aid, kindness, creativity, critical thinking, harm reduction, and learning/unlearning.

- We all need to get the fuck off of social media brain rot, build community and joy locally, stop thinking we always know what's best, and examine what we actually value as individuals (and whether or not our actions align with those values).

- Everyone needs to go to therapy! Therapy needs to be affordable!

I'll come back to this periodically, but for now I'll leave you with this: a wonderful free poster I got from Outlet in Portland had this great phrase on it: "Build Community, Lean Into Joy, Fight Fascism"

Last updated: 4/19/26